Your employees are already using AI tools you’ve never approved. Here’s what that means and what to do about it.

Shadow AI is the use of AI tools, models, or services by employees inside an organization without the knowledge or approval of IT or security teams.

The term is broad. It covers public chatbots, code assistants used on personal accounts, AI-powered browser extensions, writing and translation tools, open-source models run locally on company laptops, and AI features quietly embedded in SaaS applications that activate without IT awareness.

“A staggering 90% of AI usage in the enterprise occurs without the knowledge of security and IT teams.”

How does Shadow AI occur?

Here is a classic example:

A sales manager at a mid-sized firm has to prepare a proposal for a major client. Short on time, the manager pastes the client’s financial details, internal pricing models, and contract terms into a public AI chatbot to generate a first draft. The intention is purely to meet the deadline, not to leak information. By the time IT finds out, that sensitive data has already been processed on external servers outside the company’s control. This scenario is not hypothetical. It is happening across organizations right now.

Shadow AI vs. Shadow IT: key differences

Shadow IT has long been a governance headache: employees adopting unauthorized cloud storage, project management apps, or messaging platforms. Shadow AI carries every risk of shadow IT and adds new ones.

| Dimension | Shadow IT | Shadow AI |
| --- | --- | --- |
| Detection difficulty | Moderate; tools leave network traces | High; most AI traffic runs over HTTPS, so firewalls cannot see its content |
| Data exposure risk | Files shared externally | Sensitive data embedded into model inputs |
| Regulatory impact | Data residency, access control | GDPR, HIPAA, EU AI Act compliance failures |
| Speed of proliferation | Weeks to months | Days; no installation required |

How widespread is the problem?

38% of employees acknowledge sharing sensitive work data with AI tools without employer permission (IBM-sponsored study).

Only 37% of organizations have policies in place to detect shadow AI (IBM, 2025).

Adoption is accelerating fastest among younger employees. IBM research found that 35% of Gen Z workers are likely to use only personal AI applications rather than company-approved ones, more than double the rate of other age groups.

Gartner predicts that by 2030, more than 40% of enterprises will experience security or compliance incidents linked to unauthorized shadow AI.

By sector, finance, healthcare, and legal face the sharpest exposure: data sensitivity is highest in these industries, and employee curiosity about AI is just as strong. The “Bring Your Own AI” (BYOAI) trend is accelerating this further, with employees personally subscribing to frontier AI tools like ChatGPT or Claude and accessing them from work devices, completely outside corporate visibility.

Top risks for enterprises

1. Data leakage and IP exposure

- Employees may paste confidential contracts, source code, financial models, or customer data into public AI tools. Depending on the provider’s terms, that data may be retained or used for model training, and once it has been absorbed into model parameters it cannot realistically be retrieved or deleted.

- IBM’s 2025 Cost of a Data Breach Report found that breaches involving shadow AI cost organizations an average of $670,000 more than other incidents. Insider risk driven by AI negligence costs enterprises $10.3 million annually.

2. Regulatory and compliance violations

- GDPR, HIPAA, SOC 2, PCI DSS, and CCPA were not built with AI in mind, but they still apply to the data employees feed into AI tools. Shadow AI bypasses the controls organizations rely on to comply with these frameworks.

- The EU AI Act adds governance obligations for organizations that deploy AI systems, so AI usage that goes undetected can itself become a compliance exposure.

3. Expanded attack surface

- Most AI platforms operate over HTTPS, which means standard firewall rules cannot inspect the content of requests without TLS (SSL) inspection.

- As organizations deploy autonomous AI agents, the risk compounds. These systems interact with multiple platforms, creating complex hidden pathways that attackers can exploit at machine speed.
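
Even when request content is encrypted, the destination hostname usually still shows up in DNS or proxy logs, which can give a rough first estimate of shadow AI usage. Below is a minimal sketch of that idea. It assumes a simplified log format of one `<user> <hostname>` pair per line and a hand-maintained watchlist of AI service domains. The format, the watchlist, the sample data, and the function names are all illustrative assumptions, not taken from any specific product.

```python
from collections import Counter

# Illustrative watchlist (assumption); a real deployment would maintain its own.
AI_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}


def is_ai_host(hostname: str) -> bool:
    """Return True if hostname equals, or is a subdomain of, a watched domain."""
    host = hostname.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def count_ai_requests(log_lines):
    """Count requests to watched AI domains per user.

    Assumes each line looks like "<user> <hostname>"; other lines are skipped.
    """
    counts = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 2:
            continue
        user, hostname = parts
        if is_ai_host(hostname):
            counts[user] += 1
    return counts


# Made-up sample data for illustration only.
sample = [
    "alice chatgpt.com",
    "bob intranet.example.com",
    "alice api.claude.ai",
    "carol claude.ai",
]
print(count_ai_requests(sample))
```

A count like this only shows which users reach which services. It says nothing about what was sent, so it is a starting point for measuring scope rather than a substitute for content inspection.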

4. Biased or unreliable outputs influencing decisions

An unauthorized AI-driven resume screening tool could introduce hidden bias, leading to discrimination lawsuits. Because the tool bypassed IT approval, there is no record of how decisions were made, which creates both legal and reputational exposure.

5. Hallucinated outputs in critical workflows

When employees rely on unvalidated AI outputs for legal filings, financial disclosures, or medical documentation, errors may not be caught before they cause real damage.

6. Vendor lock-in and loss of auditability

Teams that build workflows on unapproved AI tools create invisible dependencies. When a vendor changes its pricing, API, or data policy, the organization has no visibility and no documented process to audit what decisions those tools influenced. Compliance investigations become nearly impossible to resolve.

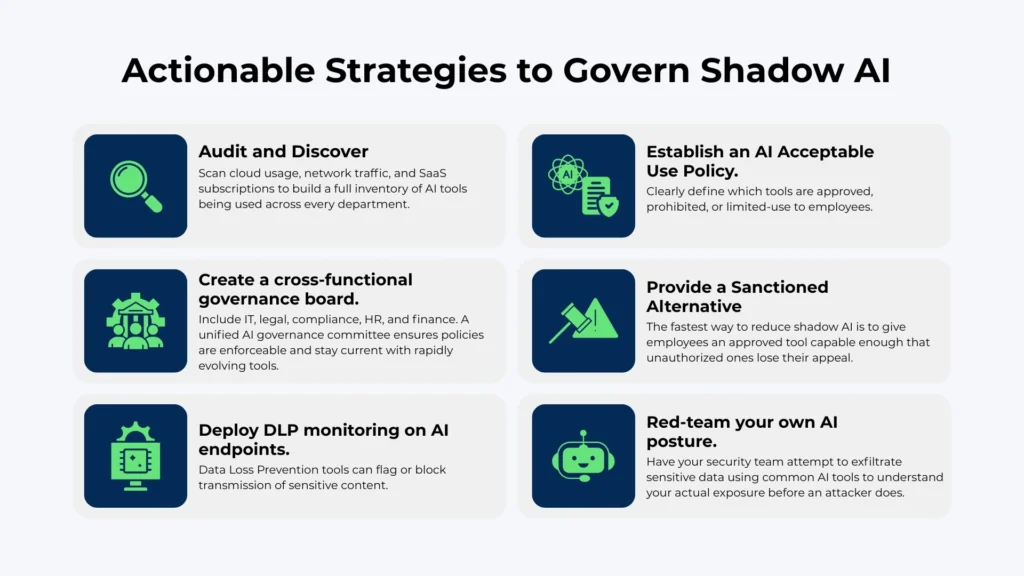

Shadow AI cannot be solved by banning AI. With more than 80% of employees already using unapproved tools, prohibition would only drive usage underground. Instead, measure its scope honestly and build governance frameworks that channel AI adoption through secure, approved pathways, making it easier to do the right thing.

Effective mitigation requires organizations to move beyond reactive prohibition toward a structured governance model. This includes a combination of technical controls, enforceable policy, and employee enablement.
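
As one illustration of a technical control, a lightweight check could mask obviously sensitive strings before a prompt leaves the organization. The sketch below assumes just two illustrative patterns: email addresses and 16-digit card-like numbers. The pattern set and the `redact` helper are hypothetical and nowhere near a complete data loss prevention rule set.

```python
import re

# Illustrative patterns only (assumption); real rules would be much broader.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "CARD": re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b"),
}


def redact(prompt: str):
    """Replace matches of each pattern with a [LABEL] placeholder.

    Returns the redacted prompt and the list of labels that matched.
    """
    findings = []
    for label, pattern in PATTERNS.items():
        prompt, n = pattern.subn(f"[{label}]", prompt)
        if n:
            findings.append(label)
    return prompt, findings


text = "Contact jane.doe@example.com, card 4111 1111 1111 1111."
clean, found = redact(text)
print(clean)
print(found)
```

Returning the list of matched labels alongside the redacted text lets the same check feed a policy decision (block, warn, or log) rather than silently rewriting the prompt.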